OpenStack Fundamentals: Building a Private Cloud from Scratch

OpenStack is an open source IaaS platform for building private clouds. This post covers the architecture, the core components, installation, and a complete lab that goes from nothing to a reachable VM.

OpenStack is an open source IaaS (Infrastructure as a Service) project originally developed jointly by Rackspace and NASA. Today CERN, Walmart, BMW, and hundreds of other organizations use it to build their own private clouds.

The fundamental difference from public clouds such as AWS or GCP: the infrastructure runs on your own hardware, you operate it yourself, and the data never leaves your own datacenter.

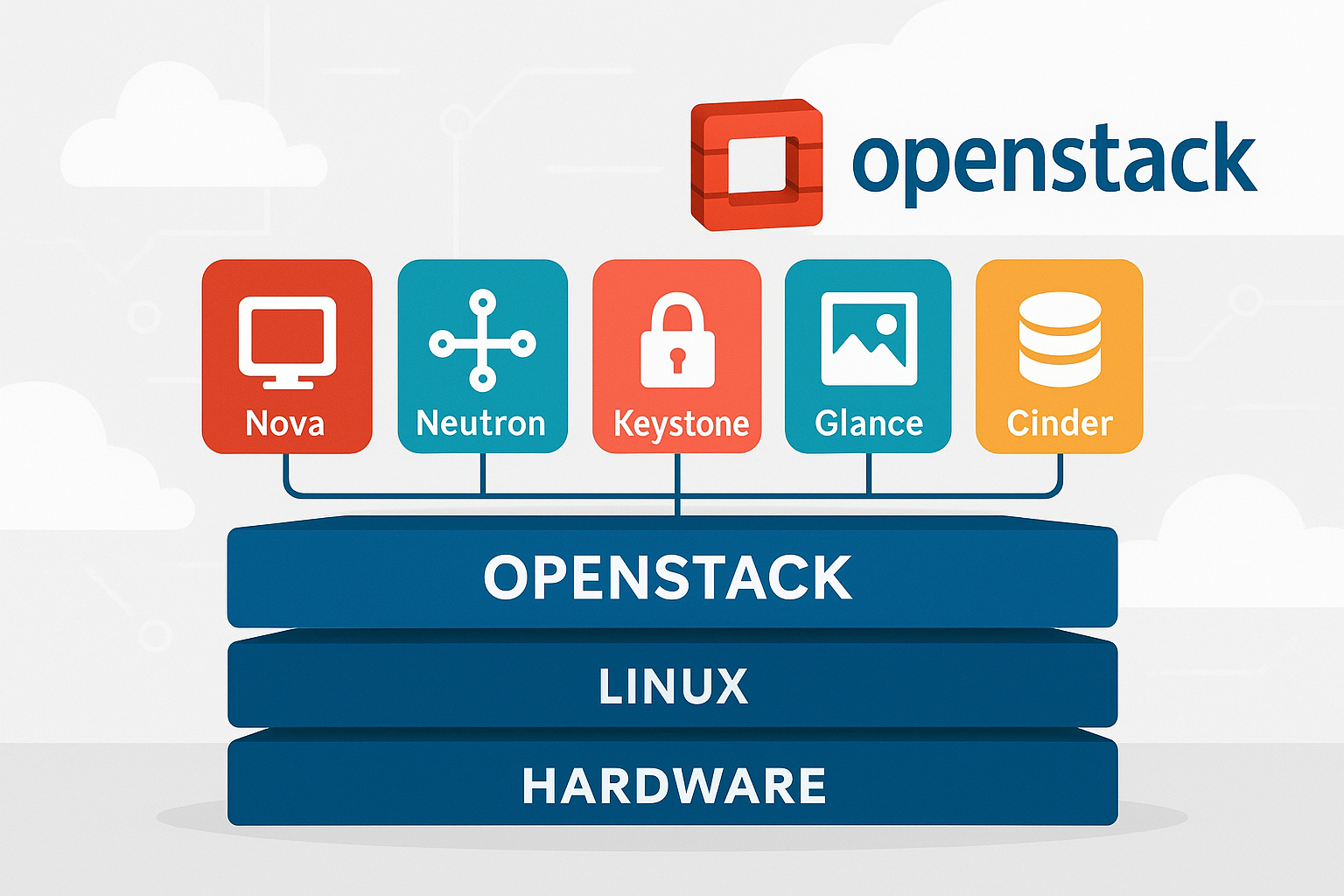

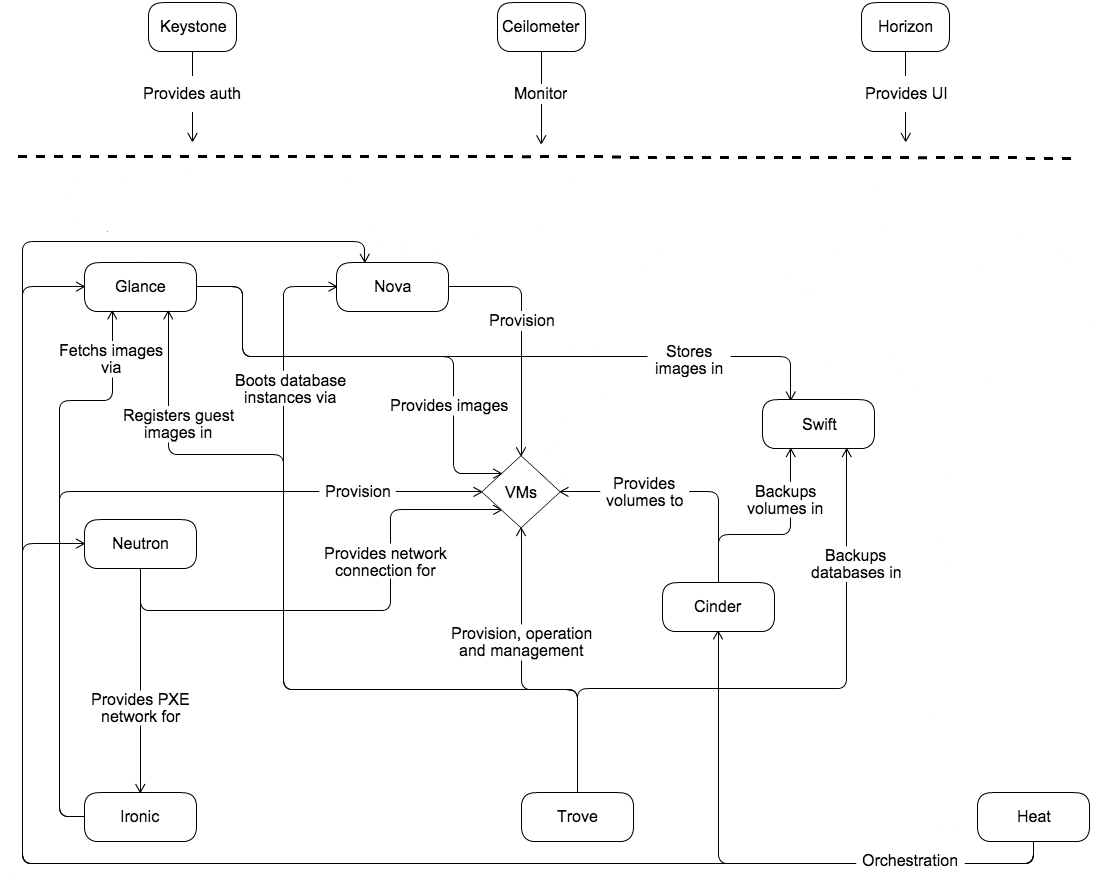

1. OpenStack Architecture

OpenStack is not a single application. It is a collection of services, each with a specific role, that talk to one another through REST APIs and a message queue (RabbitMQ).

Core services:

| Service | Code Name | Function |
|---|---|---|
| Identity | Keystone | Authentication and authorization for all services |
| Compute | Nova | Creates and manages virtual machines |
| Networking | Neutron | Virtual networks, routers, floating IPs, security groups |
| Image | Glance | Stores and distributes disk images (OS templates) |
| Block Storage | Cinder | Persistent volumes that can be attached to VMs |
| Object Storage | Swift | Stores files/blobs at large scale |
| Dashboard | Horizon | Web UI for all services |
| Orchestration | Heat | Deploys infrastructure from YAML templates |
| Telemetry | Ceilometer | Resource monitoring and metering |
| Placement | Placement | Tracks resource inventory and usage |

Request Flow: "Create a VM"

It helps to understand this before starting the lab, because it explains why so many services take part in one seemingly simple action:

User: openstack server create ...
  │
  ▼
[1] Keystone       → validate the token, check permissions in the project
  │
  ▼
[2] Nova API       → accept the request, hand it to the scheduler via RabbitMQ
  │
  ▼
[3] Nova Scheduler → pick a compute node using filters and weights
  │
  ├──► [4] Glance       → disk image is downloaded to the compute node
  ├──► [5] Neutron      → create a port, assign an IP, wire up the virtual switch
  ├──► [6] Cinder       → attach a volume if requested
  └──► [7] Nova Compute → spawn the VM via libvirt/KVM on the compute node
            │
            └──► VM running, IP assigned

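Components of the same service (for example Nova API, scheduler, and compute) coordinate asynchronously over RabbitMQ, while calls between different services (for example Nova to Glance or Neutron) are synchronous HTTP requests to their REST APIs.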
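Step [3] is the least obvious part of the flow, so here is a toy model of it. This is only a sketch of the filter-then-weigh idea, not Nova's scheduler code: the host names, the input format (`name free_ram_mb free_vcpus`), and the function name `pick_host` are all made up for illustration, and the only weigher modelled is "prefer the host with the most free RAM".

```shell
#!/bin/sh
# Toy filter-and-weigh scheduler (illustrative only, not Nova code).
# stdin: one host per line, "name free_ram_mb free_vcpus" (hypothetical format).
# args:  RAM in MB and vCPUs requested by the new VM.
pick_host() {
    need_ram=$1
    need_vcpus=$2
    # Filter phase: keep only hosts that can fit the request.
    # Weigh phase: sort the survivors by free RAM, largest first.
    awk -v r="$need_ram" -v c="$need_vcpus" \
        '$2 >= r && $3 >= c { print $2, $1 }' |
        sort -k1,1nr -k2,2 |
        head -n 1 |
        awk '{ print $2 }'
}

# Example: three imaginary compute nodes, request 2048 MB and 2 vCPUs.
printf 'compute1 2048 2\ncompute2 8192 4\ncompute3 4096 1\n' | pick_host 2048 2
```

An empty result means every host was filtered out, which is roughly the situation behind a "No valid host was found" error.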

2. Deployment Models

There are several ways to deploy OpenStack, depending on your needs and hardware capacity.

2.1 All-in-One (DevStack)

All services run on a single server. This is suitable only for development and learning, not for production.

[ Single Server ]

- Controller services (Keystone, Nova API, Neutron Server, Glance)

- Compute (Nova Compute + KVM)

- Storage (Cinder, Swift)

- Network (Neutron + OVS)

- Database (MariaDB)

- Message Queue (RabbitMQ)

Limitation: if the server goes down, the entire cloud goes down.

2.2 Multi-Node (Production Standard)

Services are split across several servers by role:

                    ┌─────────────────────────────┐
                    │       Controller Node       │
                    │  Keystone, Nova API,        │
                    │  Neutron Server, Glance,    │
                    │  Horizon, MariaDB,          │
                    │  RabbitMQ, Memcached        │
                    └──────────────┬──────────────┘
                                   │ Management Network
          ┌────────────────────────┼────────────────────────┐
          │                        │                        │
┌─────────▼────────┐    ┌──────────▼───────┐   ┌────────────▼────────┐
│ Compute Node 1   │    │ Compute Node 2   │   │ Storage Node        │
│ Nova Compute     │    │ Nova Compute     │   │ Cinder Volume       │
│ Neutron Agent    │    │ Neutron Agent    │   │ Swift Storage       │
│ KVM/QEMU         │    │ KVM/QEMU         │   │ Ceph (optional)     │
└──────────────────┘    └──────────────────┘   └─────────────────────┘

Advantages: the controller can be made highly available, and compute nodes can be added to scale horizontally.

2.3 High Availability (HA)

The controllers are clustered across at least 3 nodes (an odd number, so a majority can always be formed), the database runs as a Galera cluster, RabbitMQ is clustered, and a load balancer (HAProxy) sits in front.

                 ┌──────────────────────┐
                 │    Load Balancer     │
                 │    (HAProxy/VIP)     │
                 └───────────┬──────────┘
        ┌────────────────────┼────────────────────┐
┌───────▼──────┐     ┌───────▼──────┐     ┌───────▼──────┐
│ Controller 1 │     │ Controller 2 │     │ Controller 3 │
│   (Active)   │     │   (Active)   │     │   (Active)   │
└───────┬──────┘     └───────┬──────┘     └───────┬──────┘
┌───────┴────────────────────┴────────────────────┴──────┐
│                Galera / MariaDB Cluster                │
└────────────────────────────────────────────────────────┘
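The "odd number" rule comes from majority voting: a cluster keeps working only while more than half of its members can still see each other. The arithmetic below is a small sketch of that rule; the function names are invented for this post, and it assumes every member has exactly one vote.

```shell
#!/bin/sh
# Majority (quorum) needed for a cluster of N voting members.
quorum() { echo $(( $1 / 2 + 1 )); }

# How many members may fail while a majority still survives.
tolerated_failures() { echo $(( ($1 - 1) / 2 )); }

for n in 2 3 4 5; do
    echo "$n nodes: quorum $(quorum "$n"), survives $(tolerated_failures "$n") failure(s)"
done
```

A fourth node tolerates no more failures than three do, which is why controller counts usually go 3, 5, 7.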

2.4 Installation Methods

| Method | Best For | Complexity |
|---|---|---|
| DevStack | Learning, development | Low |
| OpenStack-Ansible | Production, automation | Medium-High |
| Kolla-Ansible | Production, containerized | Medium-High |
| Manual (distro packages) | Learning the architecture | High |
| MicroStack / Snap | Workstation, demo | Low |

3. Lab: Installing DevStack (All-in-One)

Required Specifications

| Resource | Minimum | Comfortable |
|---|---|---|
| CPU | 4 cores, VT-x/AMD-V enabled | 8 cores |
| RAM | 8 GB | 16 GB |
| Disk | 50 GB | 100 GB SSD |
| OS | Ubuntu 22.04 LTS | Ubuntu 22.04 LTS |
| Network | 1 NIC with internet access | 2 NICs |

You can use VirtualBox, VMware, or a VPS (Hetzner CX31 or equivalent).

Installation Steps
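Before installing, it can save time to compare the machine against the minimum column of the table. The helper below is a sketch under assumptions: `meets_minimum` is a name invented here, the thresholds are simply copied from the table, and the commented usage line assumes a Linux host with GNU coreutils.

```shell
#!/bin/sh
# Compare a host against the minimum specs from the table.
# args: CPU cores, RAM in GB, free disk in GB.
# Prints one line per failed check and returns non-zero if any check fails.
meets_minimum() {
    cores=$1; ram_gb=$2; disk_gb=$3
    rc=0
    [ "$cores" -ge 4 ]    || { echo "CPU: $cores core(s), need 4"; rc=1; }
    [ "$ram_gb" -ge 8 ]   || { echo "RAM: $ram_gb GB, need 8"; rc=1; }
    [ "$disk_gb" -ge 50 ] || { echo "Disk: $disk_gb GB, need 50"; rc=1; }
    return $rc
}

# Usage on the target host (values gathered from the running system):
#   meets_minimum "$(nproc)" \
#       "$(awk '/MemTotal/ { print int($2 / 1048576 + 0.5) }' /proc/meminfo)" \
#       "$(df -BG --output=avail / | tail -n 1 | tr -dc '0-9')"
```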

# Make sure the OS is up to date

sudo apt-get update && sudo apt-get upgrade -y

# Create the stack user; DevStack must not be run as root

sudo useradd -s /bin/bash -d /opt/stack -m stack

sudo chmod +x /opt/stack

echo "stack ALL=(ALL) NOPASSWD: ALL" | sudo tee /etc/sudoers.d/stack

sudo chmod 0440 /etc/sudoers.d/stack

# Switch to the stack user

sudo -u stack -i

# Clone the DevStack repository

git clone https://opendev.org/openstack/devstack /opt/stack/devstack

cd /opt/stack/devstack

# Check the server's IP address

ip addr show | grep "inet " | grep -v 127.0.0.1

# Example output:

# inet 192.168.1.100/24 brd 192.168.1.255 scope global eth0

Create the local.conf configuration file:

cat > /opt/stack/devstack/local.conf << 'EOF'

[[local|localrc]]

# Passwords: change these for any real environment

ADMIN_PASSWORD=Secret1234!

DATABASE_PASSWORD=$ADMIN_PASSWORD

RABBIT_PASSWORD=$ADMIN_PASSWORD

SERVICE_PASSWORD=$ADMIN_PASSWORD

# IP address of this server

HOST_IP=192.168.1.100

# Logging

LOGFILE=/opt/stack/logs/stack.sh.log

LOGDAYS=1

# Enabled services

ENABLED_SERVICES=rabbit,mysql,key

# Nova (Compute)

ENABLED_SERVICES+=,n-api,n-cpu,n-cond,n-sch,n-novnc,n-api-meta

ENABLED_SERVICES+=,placement-api

# Glance (Image)

ENABLED_SERVICES+=,g-api

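# Neutron (Networking) with the OVN backend; the OVN and OVS daemons must be enabled explicitly
ENABLED_SERVICES+=,q-svc,q-ovn-metadata-agent,ovn-northd,ovn-controller,ovs-vswitchd,ovsdb-server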

Q_AGENT=ovn

Q_ML2_PLUGIN_MECHANISM_DRIVERS=ovn,logger

Q_ML2_TENANT_NETWORK_TYPE=geneve

Q_USE_PROVIDERNET_FOR_PUBLIC=True

# Cinder (Block Storage)

ENABLED_SERVICES+=,cinder,c-api,c-vol,c-sch

# Horizon (Dashboard)

ENABLED_SERVICES+=,horizon

# Tempest stays disabled to save RAM; uncomment the next line only if you have memory to spare

# ENABLED_SERVICES+=,tempest

EOF

Run the installation:

cd /opt/stack/devstack

./stack.sh

This takes 20 to 40 minutes depending on internet speed and hardware. The output streams in real time; an abridged, illustrative excerpt (exact lines vary by release):

[Call Trace]

./stack.sh:269:main

./stack.sh:253:run_phase

./stack.sh:220:install_keystone

...

+++ [[ "keystone" =~ ^keystone ]] && keystone_configure

...

+++ install_nova

+++ install_nova_compute

...

+++ [[ True == \T\r\u\e ]] && install_neutron

+++ configure_neutron

...

+++ install_cinder

...

Horizon is now available at http://192.168.1.100/dashboard

Keystone is serving at http://192.168.1.100/identity/

The default users are: admin and demo

The password: Secret1234!

Services:

+---------------------+-----------------------------+

| Service | Status |

+---------------------+-----------------------------+

| devstack@keystone | active (running) |

| devstack@n-api | active (running) |

| devstack@n-cpu | active (running) |

| devstack@n-sch | active (running) |

| devstack@n-cond | active (running) |

| devstack@g-api | active (running) |

| devstack@q-svc | active (running) |

| devstack@c-api | active (running) |

| devstack@c-vol | active (running) |

| devstack@horizon | active (running) |

+---------------------+-----------------------------+

stack.sh completed in 1842 seconds.

If stack.sh fails, read the log:

tail -100 /opt/stack/logs/stack.sh.log

# or search for error lines:

grep -i "error\|fail" /opt/stack/logs/stack.sh.log | tail -20

Setting Up the OpenStack CLI

# Load the admin credentials

source /opt/stack/devstack/openrc admin admin

# Or create an RC file manually

cat > ~/adminrc << 'EOF'

export OS_PROJECT_DOMAIN_NAME=Default

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_NAME=admin

export OS_USERNAME=admin

export OS_PASSWORD=Secret1234!

export OS_AUTH_URL=http://192.168.1.100/identity

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2

EOF

source ~/adminrc

# Verify the connection to Keystone

openstack token issue

Output of openstack token issue:

+------------+---------------------------------------------------------+

| Field | Value |

+------------+---------------------------------------------------------+

| expires | 2026-03-10T15:00:00+0000 |

| id | gAAAAABmXZ8k2RtKp8LuEsbn9mX3....(token panjang) |

| project_id | a1b2c3d4e5f6789012345678abcdef00 |

| user_id | 9876543210abcdef12345678fedcba09 |

+------------+---------------------------------------------------------+

If you see output like this, Keystone is running and the credentials are valid.
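When `openstack token issue` fails instead, a frequent cause is simply a missing environment variable. The check below is a minimal sketch: `missing_openrc_vars` is a hypothetical helper name, and the variable list is the subset of the adminrc file above that password authentication needs.

```shell
#!/bin/sh
# Print the name of every required OS_* variable that is unset or empty.
# The list mirrors the adminrc file shown earlier in this section.
missing_openrc_vars() {
    for name in OS_AUTH_URL OS_USERNAME OS_PASSWORD OS_PROJECT_NAME \
                OS_USER_DOMAIN_NAME OS_PROJECT_DOMAIN_NAME; do
        # Indirect lookup: read the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        [ -n "$value" ] || echo "$name"
    done
}

# Usage after sourcing an RC file:
#   missing_openrc_vars    # prints nothing when everything is set
```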

2.5 Lab: Manual Multi-Node Installation (Controller + Compute)

This approach is closer to a real production deployment. Two servers are required:

| Server | Role | IP |
|---|---|---|
| controller | Keystone, Nova API, Neutron, Glance, Horizon | 10.0.0.11 |
| compute1 | Nova Compute, Neutron Agent | 10.0.0.31 |

Preparing Both Nodes

Run these on both servers unless a comment says otherwise:

# Set the hostname; adjust per node

# On the controller:

sudo hostnamectl set-hostname controller

# On compute1:

sudo hostnamectl set-hostname compute1

# Edit /etc/hosts on both nodes

sudo tee -a /etc/hosts << 'EOF'

10.0.0.11 controller

10.0.0.31 compute1

EOF

# Update and install the base packages

sudo apt-get update && sudo apt-get upgrade -y

sudo apt-get install -y chrony python3-openstackclient

# Configure chrony (NTP); OpenStack services need synchronized clocks

# On the controller, add:

echo "allow 10.0.0.0/24" | sudo tee -a /etc/chrony/chrony.conf

sudo systemctl restart chrony

# On compute1, sync to the controller:

echo "server controller iburst" | sudo tee -a /etc/chrony/chrony.conf

sudo systemctl restart chrony

# Verify NTP synchronization

chronyc sources

# Output:

# MS Name/IP address Stratum Poll Reach LastRx Last sample

# ===============================================================================

# ^* controller 3 6 17 10 +15us[ +18us] +/- 242ms
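In the `chronyc sources` output, the row marked `^*` is the source the node is currently synchronized to. If you want to check that from a script, something like the following could work; it is a sketch that assumes the column layout shown above, and `selected_ntp_source` is a name made up for this example.

```shell
#!/bin/sh
# Read `chronyc sources` output on stdin and print the selected source,
# i.e. the row whose first column is "^*". Exits non-zero if none is selected.
selected_ntp_source() {
    awk '$1 == "^*" { print $2; found = 1 } END { exit !found }'
}

# Usage on compute1:
#   chronyc sources | selected_ntp_source    # expected to print "controller"
```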

Install MariaDB and RabbitMQ (on the Controller)

# Install MariaDB

sudo apt-get install -y mariadb-server python3-pymysql

# Configure MariaDB for OpenStack

sudo tee /etc/mysql/mariadb.conf.d/99-openstack.cnf << 'EOF'

[mysqld]

bind-address = 10.0.0.11

default-storage-engine = innodb

innodb_file_per_table = on

max_connections = 4096

collation-server = utf8_general_ci

character-set-server = utf8

EOF

sudo systemctl restart mariadb

sudo systemctl enable mariadb

# Secure installation

sudo mysql_secure_installation

# Answers:

# Enter current password for root: (empty, press Enter)

# Set root password? Y → enter a new password

# Remove anonymous users? Y

# Disallow root login remotely? Y

# Remove test database? Y

# Reload privilege tables? Y

# Install RabbitMQ

sudo apt-get install -y rabbitmq-server

# Create the openstack user in RabbitMQ

sudo rabbitmqctl add_user openstack Secret1234!

sudo rabbitmqctl set_permissions openstack ".*" ".*" ".*"

# Output:

# Adding user "openstack" ...

# Setting permissions for user "openstack" in vhost "/" ...

# Install Memcached

sudo apt-get install -y memcached python3-memcache

# Configure Memcached to listen on the controller IP

sudo sed -i 's/-l 127.0.0.1/-l 10.0.0.11/' /etc/memcached.conf

sudo systemctl restart memcached

sudo systemctl enable memcached

# Install etcd

sudo apt-get install -y etcd

sudo tee /etc/default/etcd << 'EOF'

ETCD_NAME="controller"

ETCD_DATA_DIR="/var/lib/etcd"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-01"

ETCD_INITIAL_CLUSTER="controller=http://10.0.0.11:2380"

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://10.0.0.11:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://10.0.0.11:2379"

ETCD_LISTEN_PEER_URLS="http://0.0.0.0:2380"

ETCD_LISTEN_CLIENT_URLS="http://10.0.0.11:2379"

EOF

sudo systemctl enable --now etcd

Install Keystone (on the Controller)

# Create the Keystone database

sudo mysql -u root -p << 'EOF'

CREATE DATABASE keystone;

GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'Secret1234!';

EOF

# Install the Keystone packages

sudo apt-get install -y keystone apache2 libapache2-mod-wsgi-py3

# Write the Keystone configuration

sudo tee /etc/keystone/keystone.conf << 'EOF'

[DEFAULT]

log_dir = /var/log/keystone

[database]

connection = mysql+pymysql://keystone:Secret1234!@controller/keystone

[token]

provider = fernet

EOF

# Populate the Keystone database

sudo -u keystone keystone-manage db_sync

# Initialize the Fernet keys
sudo keystone-manage fernet_setup \
  --keystone-user keystone \
  --keystone-group keystone
sudo keystone-manage credential_setup \
  --keystone-user keystone \
  --keystone-group keystone
# Bootstrap the Identity service
sudo keystone-manage bootstrap \
  --bootstrap-password Secret1234! \
  --bootstrap-admin-url http://controller:5000/v3/ \
  --bootstrap-internal-url http://controller:5000/v3/ \
  --bootstrap-public-url http://controller:5000/v3/ \
  --bootstrap-region-id RegionOne

# Configure Apache2, then restart it so the ServerName and the Keystone site take effect
echo "ServerName controller" | sudo tee -a /etc/apache2/apache2.conf
sudo systemctl enable apache2
sudo systemctl restart apache2

# Verify Keystone

export OS_USERNAME=admin

export OS_PASSWORD=Secret1234!

export OS_PROJECT_NAME=admin

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_DOMAIN_NAME=Default

export OS_AUTH_URL=http://controller:5000/v3

export OS_IDENTITY_API_VERSION=3

openstack token issue

# A token table like the one shown earlier means Keystone is working
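The CREATE DATABASE plus two GRANT statements used for Keystone come back, almost unchanged, for Glance, Nova, and Neutron. One way to avoid retyping them is to generate the SQL. The function below is a sketch: `service_db_sql` is a made-up name, it adds `IF NOT EXISTS` (not in the original commands) so reruns are harmless, and it does no escaping, so it only suits simple names and passwords like the ones in this lab.

```shell
#!/bin/sh
# Emit the CREATE/GRANT statements for one service database.
# args: database name, database user, password.
service_db_sql() {
    db=$1; user=$2; pass=$3
    printf 'CREATE DATABASE IF NOT EXISTS %s;\n' "$db"
    # Same grant twice: once for local connections, once for any host.
    for host in localhost '%'; do
        printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
            "$db" "$user" "$host" "$pass"
    done
}

# Usage (mysql prompts for the MariaDB root password):
#   service_db_sql glance glance 'Secret1234!' | sudo mysql -u root -p
```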

Install Glance (on the Controller)

# Create the database

sudo mysql -u root -p << 'EOF'

CREATE DATABASE glance;

GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY 'Secret1234!';

EOF

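# Create the service project first (once; every service user is added to it)
openstack project create --domain default --description "Service Project" service
# Create the Glance user and endpoints in Keystone
openstack user create --domain default --password Secret1234! glance
openstack role add --project service --user glance admin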

openstack service create --name glance --description "OpenStack Image" image

openstack endpoint create --region RegionOne image public http://controller:9292

openstack endpoint create --region RegionOne image internal http://controller:9292

openstack endpoint create --region RegionOne image admin http://controller:9292

# Example output of endpoint create:

# +--------------+----------------------------------+

# | Field | Value |

# +--------------+----------------------------------+

# | enabled | True |

# | id | 3c1e5aba-5a3e-4c11-bcba-... |

# | interface | public |

# | region | RegionOne |

# | service_id | 8c2faff7-... |

# | service_name | glance |

# | service_type | image |

# | url | http://controller:9292 |

# +--------------+----------------------------------+

# Install Glance

sudo apt-get install -y glance

# Configuration

sudo tee /etc/glance/glance-api.conf << 'EOF'

[DEFAULT]

[database]

connection = mysql+pymysql://glance:Secret1234!@controller/glance

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = glance

password = Secret1234!

[paste_deploy]

flavor = keystone

[glance_store]

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images/

EOF

# Populate database

sudo -u glance glance-manage db_sync

# Restart Glance so it picks up the new configuration
sudo systemctl enable glance-api
sudo systemctl restart glance-api

# Verify

openstack image list

# Output: (empty because no image has been uploaded yet, which is normal)

# +----+------+--------+

# | ID | Name | Status |

# +----+------+--------+

# +----+------+--------+
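Every service in this lab registers the same three endpoints (public, internal, admin) with only the service type and URL changing. A dry-run generator keeps that pattern in one place. This is a sketch with an invented function name, `endpoint_commands`; it only prints the commands, and it assumes the region is always RegionOne as in the rest of this post.

```shell
#!/bin/sh
# Print the three `openstack endpoint create` commands for one service.
# args: service type (image, compute, network, ...), endpoint URL.
endpoint_commands() {
    svc_type=$1; url=$2
    for iface in public internal admin; do
        echo "openstack endpoint create --region RegionOne $svc_type $iface $url"
    done
}

# Review the commands first, then pipe them to a shell to run them:
#   endpoint_commands image http://controller:9292
#   endpoint_commands image http://controller:9292 | sh
```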

Install Nova (on the Controller)

# Create the databases

sudo mysql -u root -p << 'EOF'

CREATE DATABASE nova_api;

CREATE DATABASE nova;

CREATE DATABASE nova_cell0;

GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY 'Secret1234!';

EOF

# Create the user, service, and endpoints in Keystone

openstack user create --domain default --password Secret1234! nova

openstack role add --project service --user nova admin

openstack service create --name nova --description "OpenStack Compute" compute

openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1

openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1

openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1

# Create the placement user, service, and endpoints

openstack user create --domain default --password Secret1234! placement

openstack role add --project service --user placement admin

openstack service create --name placement --description "Placement API" placement

openstack endpoint create --region RegionOne placement public http://controller:8778

openstack endpoint create --region RegionOne placement internal http://controller:8778

openstack endpoint create --region RegionOne placement admin http://controller:8778

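# Install the packages (Placement ships as placement-api; the old nova-placement-api package is gone)
# Placement also needs its own database and /etc/placement/placement.conf, which are not shown here
sudo apt-get install -y nova-api nova-conductor nova-novncproxy \
  nova-scheduler placement-api

# Configure /etc/nova/nova.conf (only the most important sections)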

sudo tee /etc/nova/nova.conf << 'EOF'

[DEFAULT]

enabled_apis = osapi_compute,metadata

transport_url = rabbit://openstack:Secret1234!@controller:5672/

my_ip = 10.0.0.11

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[api_database]

connection = mysql+pymysql://nova:Secret1234!@controller/nova_api

[database]

connection = mysql+pymysql://nova:Secret1234!@controller/nova

[api]

auth_strategy = keystone

[keystone_authtoken]

www_authenticate_uri = http://controller:5000/

auth_url = http://controller:5000/

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = Secret1234!

[neutron]

auth_url = http://controller:5000

auth_type = password

project_domain_name = Default

user_domain_name = Default

region_name = RegionOne

project_name = service

username = neutron

password = Secret1234!

service_metadata_proxy = true

metadata_proxy_shared_secret = MetaSecret123

[vnc]

enabled = true

server_listen = $my_ip

server_proxyclient_address = $my_ip

[glance]

api_servers = http://controller:9292

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

password = Secret1234!

EOF

# Populate database

sudo nova-manage api_db sync

sudo nova-manage cell_v2 map_cell0

sudo nova-manage cell_v2 create_cell --name=cell1 --verbose

sudo nova-manage db sync

# Verify the cells

sudo nova-manage cell_v2 list_cells

# Output:

# +-------+--------------------------------------+----------------------------------------+

# | Name | UUID | Transport URL |

# +-------+--------------------------------------+----------------------------------------+

# | cell0 | 00000000-0000-0000-0000-000000000000 | none:/ |

# | cell1 | 0a253206-... | rabbit://openstack:****@controller/ |

# +-------+--------------------------------------+----------------------------------------+

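# Restart all Nova services on the controller so they load the new configuration
sudo systemctl enable nova-api nova-scheduler nova-conductor nova-novncproxy
sudo systemctl restart nova-api nova-scheduler nova-conductor nova-novncproxy

# Verify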

openstack compute service list

# Output:

# +----+----------------+------------+----------+---------+-------+

# | ID | Binary | Host | Zone | Status | State |

# +----+----------------+------------+----------+---------+-------+

# | 1 | nova-conductor | controller | internal | enabled | up |

# | 2 | nova-scheduler | controller | internal | enabled | up |

# +----+----------------+------------+----------+---------+-------+

Install Nova Compute (on compute1)

# Run on the compute1 server

sudo apt-get install -y nova-compute

# Check whether hardware virtualization is available

grep -Ec '(vmx|svm)' /proc/cpuinfo

# If the result is greater than 0, KVM can be used (virt_type = kvm)

# If it is 0 (nested VM/VPS), fall back to QEMU (virt_type = qemu)

# Configure nova.conf on compute1

sudo tee /etc/nova/nova.conf << 'EOF'

[DEFAULT]

enabled_apis = osapi_compute,metadata

transport_url = rabbit://openstack:Secret1234!@controller:5672/

my_ip = 10.0.0.31

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[api]

auth_strategy = keystone

[keystone_authtoken]

www_authenticate_uri = http://controller:5000/

auth_url = http://controller:5000/

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = Secret1234!

[vnc]

enabled = true

server_listen = 0.0.0.0

server_proxyclient_address = $my_ip

novncproxy_base_url = http://controller:6080/vnc_auto.html

[glance]

api_servers = http://controller:9292

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

password = Secret1234!

# nova-compute needs Neutron credentials to plug VM ports once Neutron is installed

[neutron]

auth_url = http://controller:5000

auth_type = password

project_domain_name = Default

user_domain_name = Default

region_name = RegionOne

project_name = service

username = neutron

password = Secret1234!

[libvirt]

# If the CPU supports VT-x/AMD-V:

virt_type = kvm

# Otherwise (VPS/nested):

# virt_type = qemu

EOF

sudo systemctl enable nova-compute
sudo systemctl restart nova-compute

# Back on the controller: discover the new compute node

sudo nova-manage cell_v2 discover_hosts --verbose

# Output:

# Found 2 cell mappings.

# Skipping cell0 since it does not contain hosts.

# Getting computes from cell 'cell1': 0a253206-...

# Checking host mapping for compute host 'compute1': ...

# Creating host mapping for compute host 'compute1': ...

# Found 1 unmapped computes in cell: 0a253206-...

# Verify that the compute node is registered

openstack compute service list

# Output:

# +----+----------------+------------+------+---------+-------+

# | ID | Binary | Host | Zone | Status | State |

# +----+----------------+------------+------+---------+-------+

# | 1 | nova-conductor | controller | int | enabled | up |

# | 2 | nova-scheduler | controller | int | enabled | up |

# | 3 | nova-compute | compute1 | nova | enabled | up | ← this one

# +----+----------------+------------+------+---------+-------+
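The vmx/svm check earlier in this section decides which `virt_type` goes into the `[libvirt]` block. The same decision can be scripted. This is a sketch: `pick_virt_type` is a hypothetical helper, and the optional file argument exists only so the logic can be tried against a sample file instead of the real /proc/cpuinfo.

```shell
#!/bin/sh
# Choose the [libvirt] virt_type from a cpuinfo-style file.
# Defaults to /proc/cpuinfo; pass another path to test the logic.
pick_virt_type() {
    cpuinfo=${1:-/proc/cpuinfo}
    # vmx = Intel VT-x, svm = AMD-V; either flag means KVM is usable.
    if grep -Eq '(vmx|svm)' "$cpuinfo"; then
        echo kvm
    else
        echo qemu
    fi
}

# Usage on compute1:
#   echo "virt_type = $(pick_virt_type)"
```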

Install Neutron (Controller and Compute1)

# ======= On the Controller =======

# Create the database

sudo mysql -u root -p << 'EOF'

CREATE DATABASE neutron;

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'Secret1234!';

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'Secret1234!';

EOF

# User and endpoints

openstack user create --domain default --password Secret1234! neutron

openstack role add --project service --user neutron admin

openstack service create --name neutron --description "OpenStack Networking" network

openstack endpoint create --region RegionOne network public http://controller:9696

openstack endpoint create --region RegionOne network internal http://controller:9696

openstack endpoint create --region RegionOne network admin http://controller:9696

# Install Neutron with the ML2 plugin and the Linux bridge agent
sudo apt-get install -y neutron-server neutron-plugin-ml2 \
  neutron-linuxbridge-agent neutron-l3-agent neutron-dhcp-agent \
  neutron-metadata-agent

# Configure /etc/neutron/neutron.conf

sudo tee /etc/neutron/neutron.conf << 'EOF'

[DEFAULT]

core_plugin = ml2

service_plugins = router

transport_url = rabbit://openstack:Secret1234!@controller

auth_strategy = keystone

notify_nova_on_port_status_changes = true

notify_nova_on_port_data_changes = true

[database]

connection = mysql+pymysql://neutron:Secret1234!@controller/neutron

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = neutron

password = Secret1234!

[nova]

auth_url = http://controller:5000

auth_type = password

project_domain_name = Default

user_domain_name = Default

region_name = RegionOne

project_name = service

username = nova

password = Secret1234!

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

EOF

# Configure the ML2 plugin

sudo tee /etc/neutron/plugins/ml2/ml2_conf.ini << 'EOF'

[ml2]

type_drivers = flat,vlan,vxlan

tenant_network_types = vxlan

mechanism_drivers = linuxbridge,l2population

extension_drivers = port_security

[ml2_type_flat]

flat_networks = provider

[ml2_type_vxlan]

vni_ranges = 1:1000

[securitygroup]

enable_ipset = true

EOF

# Configure the Linux bridge agent

PROVIDER_IFACE=eth0 # interface connected to the external network

sudo tee /etc/neutron/plugins/ml2/linuxbridge_agent.ini << EOF

[linux_bridge]

physical_interface_mappings = provider:${PROVIDER_IFACE}

[vxlan]

enable_vxlan = true

local_ip = 10.0.0.11

l2_population = true

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

EOF

# Configure the L3 agent

sudo tee /etc/neutron/l3_agent.ini << 'EOF'

[DEFAULT]

interface_driver = linuxbridge

EOF

# Configure the DHCP agent

sudo tee /etc/neutron/dhcp_agent.ini << 'EOF'

[DEFAULT]

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

EOF

# Configure the metadata agent

sudo tee /etc/neutron/metadata_agent.ini << 'EOF'

[DEFAULT]

nova_metadata_host = controller

metadata_proxy_shared_secret = MetaSecret123

EOF

# Populate the Neutron database
sudo neutron-db-manage --config-file /etc/neutron/neutron.conf \
  --config-file /etc/neutron/plugins/ml2/ml2_conf.ini \
  upgrade head

# Restart Nova API so the Nova-Neutron integration takes effect

sudo systemctl restart nova-api

# Restart all Neutron services on the controller so they load the new configuration
sudo systemctl enable neutron-server neutron-linuxbridge-agent \
  neutron-dhcp-agent neutron-metadata-agent neutron-l3-agent
sudo systemctl restart neutron-server neutron-linuxbridge-agent \
  neutron-dhcp-agent neutron-metadata-agent neutron-l3-agent

# Verify

openstack network agent list

# Output:

# +------+--------------------+------------+-------------------+-------+-------+

# | ID | Agent Type | Host | Availability Zone | Alive | State |

# +------+--------------------+------------+-------------------+-------+-------+

# | ... | Linux bridge agent | controller | None | True | UP |

# | ... | DHCP agent | controller | nova | True | UP |

# | ... | L3 agent | controller | nova | True | UP |

# | ... | Metadata agent | controller | None | True | UP |

# +------+--------------------+------------+-------------------+-------+-------+

# ======= On Compute1 =======

sudo apt-get install -y neutron-linuxbridge-agent

PROVIDER_IFACE=eth0

sudo tee /etc/neutron/neutron.conf << 'EOF'

[DEFAULT]

transport_url = rabbit://openstack:Secret1234!@controller

auth_strategy = keystone

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = neutron

password = Secret1234!

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

EOF

sudo tee /etc/neutron/plugins/ml2/linuxbridge_agent.ini << EOF

[linux_bridge]

physical_interface_mappings = provider:${PROVIDER_IFACE}

[vxlan]

enable_vxlan = true

local_ip = 10.0.0.31

l2_population = true

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

EOF

sudo systemctl restart nova-compute
sudo systemctl enable neutron-linuxbridge-agent
sudo systemctl restart neutron-linuxbridge-agent

# Back on the controller, check that the compute1 agent has appeared

openstack network agent list | grep compute1

# +------+--------------------+----------+------+-------+-------+

# | ... | Linux bridge agent | compute1 | None | True | UP |

# +------+--------------------+----------+------+-------+-------+

4. Lab: Identity Management (Keystone)

# List all projects

openstack project list

# Output:

# +----------------------------------+---------+

# | ID | Name |

# +----------------------------------+---------+

# | a1b2c3d4e5f678901234567890abcdef | admin |

# | b2c3d4e5f678901234567890abcdef01 | demo |

# | c3d4e5f678901234567890abcdef0123 | service |

# +----------------------------------+---------+

# Create a new project
openstack project create \
  --domain Default \
  --description "Development Team" \
  dev-team

# Create a new user
openstack user create \
  --domain Default \
  --password 'DevPass123!' \
  ali-dev

# Assign role

openstack role add --project dev-team --user ali-dev member

# Check the role assignment

openstack role assignment list --user ali-dev --project dev-team --names

# +--------+---------+-------+----------+--------+-----------+

# | Role | User | Group | Project | Domain | Inherited |

# +--------+---------+-------+----------+--------+-----------+

# | member | ali-dev | | dev-team | | False |

# +--------+---------+-------+----------+--------+-----------+

5. Lab: Image Management (Glance)

# Download the Ubuntu 22.04 cloud image
wget https://cloud-images.ubuntu.com/jammy/current/jammy-server-cloudimg-amd64.img \
  -O /tmp/ubuntu-22.04.img

# Upload it to Glance
openstack image create \
  --disk-format qcow2 \
  --container-format bare \
  --file /tmp/ubuntu-22.04.img \
  --public \
  --min-ram 512 \
  --min-disk 5 \
  "Ubuntu 22.04 LTS"

# Output:

# +------------------+----------------------------------------------+

# | Field | Value |

# +------------------+----------------------------------------------+

# | container_format | bare |

# | disk_format | qcow2 |

# | id | f1e2d3c4-b5a6-7890-abcd-ef0123456789 |

# | min_disk | 5 |

# | min_ram | 512 |

# | name | Ubuntu 22.04 LTS |

# | size | 676495360 |

# | status | active |

# | visibility | public |

# +------------------+----------------------------------------------+

# List images

openstack image list

# +--------------------------------------+------------------+--------+

# | ID | Name | Status |

# +--------------------------------------+------------------+--------+

# | f1e2d3c4-b5a6-7890-abcd-ef0123456789 | Ubuntu 22.04 LTS | active |

# | a2b3c4d5-e6f7-8901-bcde-f01234567890 | CirrOS 0.6.1 | active |

# +--------------------------------------+------------------+--------+
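The `--min-ram 512` and `--min-disk 5` values set during upload matter later: a flavor smaller than those minimums will generally be refused when you boot from the image. The comparison below is a sketch of that rule, not Nova's actual validation code; `flavor_fits_image` is a name invented here, and the m1.tiny and m1.small sizes in the usage comments are the usual DevStack defaults, stated as an assumption.

```shell
#!/bin/sh
# Succeed when a flavor satisfies an image's minimum requirements.
# args: flavor RAM (MB), flavor root disk (GB), image min_ram (MB), image min_disk (GB).
flavor_fits_image() {
    flavor_ram_mb=$1; flavor_disk_gb=$2; min_ram_mb=$3; min_disk_gb=$4
    [ "$flavor_ram_mb" -ge "$min_ram_mb" ] && [ "$flavor_disk_gb" -ge "$min_disk_gb" ]
}

# Usage with the image above (min_ram 512, min_disk 5):
#   flavor_fits_image 2048 20 512 5 && echo "m1.small fits"
#   flavor_fits_image 512 1 512 5   || echo "m1.tiny is too small (1 GB disk)"
```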

6. Lab: Creating a VM (Nova + Neutron)

# Create a keypair from an existing public key

openstack keypair create --public-key ~/.ssh/id_ed25519.pub my-key

# Create the internal network (Neutron picks the tenant network type configured in ML2)
openstack network create internal-net
openstack subnet create \
  --network internal-net \
  --subnet-range 10.10.0.0/24 \
  --gateway 10.10.0.1 \
  --dns-nameserver 8.8.8.8 \
  internal-subnet

# Create a router and connect it to the external and internal networks
# (assumes an external network named "public" exists, as DevStack creates by default)
openstack router create internal-router
openstack router set --external-gateway public internal-router
openstack router add subnet internal-router internal-subnet

# Security group for SSH

openstack security group create --description "Allow SSH" dev-sg

openstack security group rule create --protocol tcp --dst-port 22 --remote-ip 0.0.0.0/0 dev-sg

openstack security group rule create --protocol icmp dev-sg

# Create the VM

openstack server create \

--flavor m1.small \

--image "Ubuntu 22.04 LTS" \

--key-name my-key \

--network internal-net \

--security-group dev-sg \

my-vm-01

# Monitor until the status is ACTIVE

watch -n 2 "openstack server show my-vm-01 | grep -E 'status|addresses|task_state'"

# Output while building:

# | OS-EXT-STS:task_state | spawning |

# | OS-EXT-STS:vm_state | building |

# | status | BUILD |

# Output when finished:

# | OS-EXT-STS:task_state | None |

# | OS-EXT-STS:vm_state | active |

# | addresses | internal-net=10.10.0.5 |

# | status | ACTIVE |

# Assign floating IP

openstack floating ip create public

# | floating_ip_address | 203.0.113.10 |

openstack server add floating ip my-vm-01 203.0.113.10

# SSH to the VM

ssh ubuntu@203.0.113.10 -i ~/.ssh/id_ed25519

# ubuntu@my-vm-01:~$
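The `watch` loop above relies on a person noticing when the status turns ACTIVE. For scripted labs, a small polling helper can do the waiting instead. This is a minimal sketch: `wait_for_status` is a hypothetical helper name, not part of the OpenStack CLI, and it treats the literal output `ERROR` as a failure, which matches how Nova reports a failed build.

```shell
#!/bin/sh
# wait_for_status CMD EXPECTED [TIMEOUT_SECONDS] [INTERVAL_SECONDS]
# Runs CMD repeatedly until its output equals EXPECTED.
# Returns 0 on success, 1 on timeout, 2 if the output is ERROR.
wait_for_status() {
    cmd=$1
    expected=$2
    timeout=${3:-300}
    interval=${4:-2}
    elapsed=0
    current=""
    while [ "$elapsed" -le "$timeout" ]; do
        # A failing CMD is treated as "no status yet" and retried
        current=$(eval "$cmd" 2>/dev/null) || current=""
        if [ "$current" = "$expected" ]; then
            return 0
        fi
        if [ "$current" = "ERROR" ]; then
            echo "resource entered ERROR state" >&2
            return 2
        fi
        sleep "$interval"
        elapsed=$((elapsed + interval))
    done
    echo "timed out after ${timeout}s (last status: ${current})" >&2
    return 1
}

# Example (assumes the my-vm-01 server from this lab exists):
# wait_for_status "openstack server show my-vm-01 -f value -c status" ACTIVE 600 5
```

The same helper works for volumes or backups by swapping the command, for example `openstack volume show my-data-vol -f value -c status` with `available` as the expected value.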

7. Lab: Block Storage (Cinder)

# Create a volume

openstack volume create --size 20 my-data-vol

# Attach it to the VM (--device is only a hint; confirm the real device name with lsblk inside the VM)

openstack server add volume --device /dev/vdb my-vm-01 my-data-vol

# Inside the VM:

sudo mkfs.ext4 /dev/vdb

sudo mkdir /mnt/data

sudo mount /dev/vdb /mnt/data

df -h /mnt/data

# Filesystem Size Used Avail Use% Mounted on

# /dev/vdb 20G 44M 19G 1% /mnt/data

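# Mount automatically at boot (by UUID; nofail keeps the VM bootable if the volume is detached)

echo "UUID=$(sudo blkid -s UUID -o value /dev/vdb) /mnt/data ext4 defaults,nofail 0 2" | sudo tee -a /etc/fstab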

8. Orchestration with Heat

Heat deploys an entire infrastructure from a single template file (HOT, Heat Orchestration Template).

# web-stack.yaml

heat_template_version: 2018-08-31

parameters:

  key_name:

    type: string

    default: my-key

  image:

    type: string

    default: "Ubuntu 22.04 LTS"

  flavor:

    type: string

    default: m1.small

resources:

  web_sg:

    type: OS::Neutron::SecurityGroup

    properties:

      rules:

        - protocol: tcp

          port_range_min: 22

          port_range_max: 22

        - protocol: tcp

          port_range_min: 80

          port_range_max: 80

        - protocol: icmp

  private_net:

    type: OS::Neutron::Net

  private_subnet:

    type: OS::Neutron::Subnet

    properties:

      network: { get_resource: private_net }

      cidr: 192.168.100.0/24

      dns_nameservers: [8.8.8.8]

  router:

    type: OS::Neutron::Router

    properties:

      external_gateway_info: { network: public }

  router_iface:

    type: OS::Neutron::RouterInterface

    properties:

      router_id: { get_resource: router }

      subnet: { get_resource: private_subnet }

  server:

    type: OS::Nova::Server

    properties:

      image: { get_param: image }

      flavor: { get_param: flavor }

      key_name: { get_param: key_name }

      networks:

        - network: { get_resource: private_net }

      security_groups:

        - { get_resource: web_sg }

      user_data: |

        #!/bin/bash

        apt-get update -y && apt-get install -y nginx

        systemctl enable --now nginx

  fip:

    type: OS::Neutron::FloatingIP

    properties:

      floating_network: public

  fip_assoc:

    type: OS::Neutron::FloatingIPAssociation

    properties:

      floatingip_id: { get_resource: fip }

      port_id: { get_attr: [server, addresses, { get_resource: private_net }, 0, port] }

outputs:

  server_ip:

    value: { get_attr: [fip, floating_ip_address] }

# Deploy the stack

openstack stack create --template web-stack.yaml web-stack-01

# Monitor

openstack stack event list --follow web-stack-01

# 2026-03-10 08:01:47 web-stack-01 CREATE_COMPLETE Stack successfully created

# Get the output IP

openstack stack output show web-stack-01 server_ip

# | output_value | 203.0.113.25 |

curl http://203.0.113.25

# Welcome to nginx! (or the default nginx page)

# Delete the stack (all of its resources are deleted with it)

openstack stack delete --wait web-stack-01

9. Object Storage (Swift)

Swift stores data as objects: a file plus its metadata. Unlike block storage (Cinder), which has to be attached to a VM, Swift can be accessed directly over its REST API from anywhere. It suits backups, media files, log archives, and static content.

Swift Architecture

               ┌──────────────────┐
               │   Swift Proxy    │ ← receives HTTP requests
               └────────┬─────────┘
                        │
       ┌────────────────┼────────────────┐
       │                │                │
┌──────▼──────┐  ┌──────▼──────┐  ┌──────▼──────┐
│   Storage   │  │   Storage   │  │   Storage   │
│   Node 1    │  │   Node 2    │  │   Node 3    │
│   zone-1    │  │   zone-2    │  │   zone-3    │
└─────────────┘  └─────────────┘  └─────────────┘

By default Swift keeps 3 replicas of every object (the count is configurable). If one storage node dies, the data is still served from the other replicas.

Swift Core Concepts

| Concept | Description |

|---|---|

| Account | Top-level namespace, usually one per project/tenant |

| Container | Similar to a "folder" or an S3 "bucket"; holds objects |

| Object | File + metadata (default maximum 5GB per object; larger with SLO) |

| Ring | Mapping that determines which storage node an object is stored on |

| Replica | Number of copies of the data (default 3), spread across different zones |

| Zone | Failure-domain isolation: a different server, rack, or datacenter |

Lab: Object Storage Operations

# Create a container (similar to an S3 bucket)

openstack container create my-backups

# Output:

# +---------------------------------------+-----------+

# | account | container |

# +---------------------------------------+-----------+

# | AUTH_a1b2c3d4e5f678901234567890abcdef | my-backups|

# +---------------------------------------+-----------+

# Upload a file to the container

echo "Ini file backup database" > /tmp/db-backup-20260310.sql

openstack object create my-backups /tmp/db-backup-20260310.sql

# Output:

# +---------------------------------+-----------+----------------------------------+

# | object | container | etag |

# +---------------------------------+-----------+----------------------------------+

# | tmp/db-backup-20260310.sql | my-backups| <MD5 hash of the file content> |

# +---------------------------------+-----------+----------------------------------+

# Upload with a custom name

openstack object create my-backups /tmp/db-backup-20260310.sql --name backups/db/2026-03-10.sql

# List the objects in the container

openstack object list my-backups

# Output:

# +----------------------------+

# | Name |

# +----------------------------+

# | backups/db/2026-03-10.sql |

# | tmp/db-backup-20260310.sql |

# +----------------------------+

# Download object

openstack object save my-backups backups/db/2026-03-10.sql --file /tmp/restored-backup.sql

cat /tmp/restored-backup.sql

# Ini file backup database

# Show the object metadata

openstack object show my-backups backups/db/2026-03-10.sql

# Output:

# +----------------+-----------------------------------+

# | Field | Value |

# +----------------+-----------------------------------+

# | account | AUTH_a1b2c3d4e5... |

# | container | my-backups |

# | content-length | 25 |

# | content-type | application/octet-stream |

# | etag | <MD5 hash of the file content> |

# | last-modified | Mon, 10 Mar 2026 08:30:00 GMT |

# | object | backups/db/2026-03-10.sql |

# +----------------+-----------------------------------+

# Set custom metadata on the object

openstack object set my-backups backups/db/2026-03-10.sql --property backup-type=full --property database=production

# Delete an object

openstack object delete my-backups tmp/db-backup-20260310.sql

# Delete the container (it must be empty first)

openstack object delete my-backups backups/db/2026-03-10.sql

openstack container delete my-backups

Uploading Large Files (> 5GB) with SLO

Swift limits a single uploaded object to 5GB. For larger files, use a Static Large Object (SLO): the file is split into segments that are stitched back together on download.

# Install the additional swift CLI tool

pip install python-swiftclient

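# Upload a large file (for example a 10GB disk image)

# --segment-size 1073741824 = split into 1GB segments

# --object-name keeps the local tmp/ path prefix out of the object name

swift upload my-images --segment-size 1073741824 --object-name large-disk-image.raw /tmp/large-disk-image.raw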

# Output:

# large-disk-image.raw segment 0

# large-disk-image.raw segment 1

# large-disk-image.raw segment 2

# ...

# large-disk-image.raw segment 9

# large-disk-image.raw

# Swift automatically creates a manifest that links all the segments

# Download it again: it comes back as a single complete file

swift download my-images large-disk-image.raw -o /tmp/downloaded.raw

# Verify integrity

md5sum /tmp/large-disk-image.raw /tmp/downloaded.raw

# the hashes must match
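The number of segments produced by a segmented upload is a ceiling division of the file size by the segment size, which is why the 10GB example above lists segments 0 through 9. A minimal sketch of that arithmetic follows; `segment_count` is a hypothetical helper name used only for illustration.

```shell
#!/bin/sh
# segment_count FILE_BYTES SEGMENT_BYTES
# Prints how many segments a segmented upload would create (ceiling division).
segment_count() {
    file_bytes=$1
    segment_bytes=$2
    echo $(( (file_bytes + segment_bytes - 1) / segment_bytes ))
}

# Example: a 10 GiB file split into 1 GiB segments
# segment_count 10737418240 1073741824
```

This is handy when choosing `--segment-size`: clusters typically cap how many segments one manifest may reference, so very small segments on a very large file may be rejected. The exact cap depends on the cluster configuration.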

Accessing Swift via cURL (REST API)

# Get a token and the storage URL

TOKEN=$(openstack token issue -f value -c id)

SWIFT_URL=$(openstack catalog show object-store -f value -c endpoints | grep public | awk '{print $2}')

# List containers via REST (format=json makes Swift return JSON instead of plain text)

curl -s -H "X-Auth-Token: $TOKEN" "$SWIFT_URL?format=json" | python3 -m json.tool

# Upload via REST

curl -X PUT -H "X-Auth-Token: $TOKEN" -H "Content-Type: text/plain" -d "Hello from REST API" "$SWIFT_URL/my-backups/hello.txt"

# Download via REST

curl -s -H "X-Auth-Token: $TOKEN" "$SWIFT_URL/my-backups/hello.txt"

# Hello from REST API
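For a regular (non-segmented) object, the `etag` that Swift reports is the MD5 hash of the object content, so it can be computed locally and compared. Swift also accepts an `ETag` header on upload and rejects the request with 422 when the received data does not match. The sketch below assumes `md5sum` and `curl` are installed; `object_etag` and `swift_put_verified` are hypothetical helper names.

```shell
#!/bin/sh
# object_etag FILE
# Prints the MD5 hash Swift is expected to report as the etag of a plain object.
object_etag() {
    md5sum "$1" | awk '{print $1}'
}

# swift_put_verified OBJECT_URL TOKEN FILE
# Uploads FILE and lets Swift verify the content against the ETag header.
# Prints the HTTP status code (201 on success, 422 on a checksum mismatch).
swift_put_verified() {
    url=$1
    token=$2
    file=$3
    curl -sS -o /dev/null -w '%{http_code}\n' -X PUT \
        -H "X-Auth-Token: $token" \
        -H "ETag: $(object_etag "$file")" \
        -T "$file" "$url"
}

# Example (assumes TOKEN and SWIFT_URL were set as shown above):
# swift_put_verified "$SWIFT_URL/my-backups/hello.txt" "$TOKEN" /tmp/hello.txt
```

Note that the hash of an empty file is `d41d8cd98f00b204e9800998ecf8427e`, so seeing that value as an etag usually means an empty object was uploaded.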

10. Advanced Cinder: Snapshots, Backups, Multi-Backend

Volume Snapshot

A snapshot captures the state of a volume at a point in time. Useful as a safety net before an upgrade or another large change.

# Create a snapshot of an existing volume (add --force if the volume is attached and your Cinder API version requires it)

openstack volume snapshot create --volume my-data-vol --description "Before database upgrade" snap-before-upgrade

# Output:

# +-------------+--------------------------------------+

# | Field | Value |

# +-------------+--------------------------------------+

# | created_at | 2026-03-10T09:00:00.000000 |

# | description | Before database upgrade |

# | id | 55555555-6666-7777-8888-999999999999 |

# | name | snap-before-upgrade |

# | size | 20 |

# | status | available |

# | volume_id | 44444444-5555-6666-7777-888888888888 |

# +-------------+--------------------------------------+

# List all snapshots

openstack volume snapshot list

# Output:

# +------------------+---------------------+-----------+------+--------+

# | ID | Name | Description | Size | Status |

# +------------------+---------------------+-----------+------+--------+

# | 55555555-6666... | snap-before-upgrade | Before... | 20 | avail |

# +------------------+---------------------+-----------+------+--------+

# Create a new volume from the snapshot (to restore)

openstack volume create --snapshot snap-before-upgrade --size 20 restored-data-vol

# This volume can be attached to another VM to verify the data

openstack server add volume --device /dev/vdc my-vm-01 restored-data-vol
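Snapshots are most useful when they are taken consistently before every risky change. The following is a minimal sketch of a wrapper that names the snapshot after the volume, a label, and a UTC timestamp. `snapshot_before` and the naming scheme are assumptions made for illustration; only the `openstack volume snapshot create` command comes from the lab above.

```shell
#!/bin/sh
# snapshot_before VOLUME LABEL
# Creates a timestamped snapshot of VOLUME and prints the snapshot name.
snapshot_before() {
    volume=$1
    label=$2
    name="snap-${volume}-${label}-$(date -u +%Y%m%dT%H%M%SZ)"
    # For an attached volume, --force may be needed depending on the Cinder API version
    openstack volume snapshot create \
        --volume "$volume" \
        --description "Before ${label}" \
        "$name" >/dev/null || return 1
    echo "$name"
}

# Example:
# snap=$(snapshot_before my-data-vol db-upgrade) && echo "created $snap"
```

Printing the generated name makes it easy to pass the snapshot to a later `openstack volume create --snapshot` call if the change has to be rolled back.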

Volume Backup to Swift

A backup stores a copy of the volume in object storage (Swift), separate from the Cinder storage pool. It is safer because it lives on different infrastructure.

# Back up the volume to Swift (add --force if the volume is attached)

openstack volume backup create --name backup-data-vol-20260310 --description "Daily backup" my-data-vol

# Output:

# +------------+--------------------------------------+

# | Field | Value |

# +------------+--------------------------------------+

# | id | aaaabbbb-cccc-dddd-eeee-ffffffffffff |

# | name | backup-data-vol-20260310 |

# | size | 20 |

# | status | creating |

# | volume_id | 44444444-5555-6666-7777-888888888888 |

# +------------+--------------------------------------+

# Monitor backup progress

openstack volume backup show backup-data-vol-20260310 | grep status

# creating → available (takes a few minutes, depending on the size)

# List backups

openstack volume backup list

# +--------------------------------------+---------------------------+--------+------+

# | ID | Name | Status | Size |

# +--------------------------------------+---------------------------+--------+------+

# | aaaabbbb-cccc-dddd-eeee-ffffffffffff | backup-data-vol-20260310 | avail | 20 |

# +--------------------------------------+---------------------------+--------+------+

# Restore the backup to a new volume

openstack volume backup restore backup-data-vol-20260310 restored-from-backup

# Output:

# +-------------+--------------------------------------+

# | Field | Value |

# +-------------+--------------------------------------+

# | backup_id | aaaabbbb-cccc-dddd-eeee-ffffffffffff |

# | volume_id | new-vol-id-here |

# | volume_name | restored-from-backup |

# +-------------+--------------------------------------+

Volume Transfer Between Projects

To move a volume from one project to another:

# In the source project: create a transfer request (the volume must be in the available state)

openstack volume transfer request create my-data-vol

# Output:

# +------------+--------------------------------------+

# | Field | Value |

# +------------+--------------------------------------+

# | auth_key | 3b16a7c8d9e0f1a2 | ← save this!

# | id | bbbbcccc-dddd-eeee-ffff-111111111111 |

# | name | None |

# | volume_id | 44444444-5555-6666-7777-888888888888 |

# +------------+--------------------------------------+

# In the destination project: accept the transfer

openstack volume transfer request accept --auth-key 3b16a7c8d9e0f1a2 bbbbcccc-dddd-eeee-ffff-111111111111

# The volume now belongs to the destination project

Multi-Backend Storage

In production there are usually several storage tiers: fast SSD for databases, large HDD for archives. Cinder can be configured with multiple backends.

# /etc/cinder/cinder.conf

[DEFAULT]

enabled_backends = ssd-pool,hdd-pool

[ssd-pool]

volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver

volume_group = cinder-ssd-vg

volume_backend_name = SSD_FAST

target_ip_address = 10.0.0.41

target_protocol = iscsi

[hdd-pool]

volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver

volume_group = cinder-hdd-vg

volume_backend_name = HDD_LARGE

target_ip_address = 10.0.0.41

target_protocol = iscsi

# Create a volume type for each backend

openstack volume type create --property volume_backend_name=SSD_FAST ssd-fast

openstack volume type create --property volume_backend_name=HDD_LARGE hdd-large

# Create a volume on the SSD backend

openstack volume create --size 50 --type ssd-fast db-volume

# Create a volume on the HDD backend

openstack volume create --size 500 --type hdd-large archive-volume

# Check which volume type is in use

openstack volume show db-volume | grep type

# | type | ssd-fast |

openstack volume show archive-volume | grep type

# | type | hdd-large |

Volume Extend (Resize)

# Grow the volume from 20GB to 50GB

# The volume must be in the available state: unmount it inside the VM (sudo umount /mnt/data), then detach it

openstack server remove volume my-vm-01 my-data-vol

openstack volume set --size 50 my-data-vol

# Re-attach

openstack server add volume --device /dev/vdb my-vm-01 my-data-vol

# Inside the VM: remount the volume, then grow the filesystem

sudo mount /dev/vdb /mnt/data

sudo resize2fs /dev/vdb

# resize2fs 1.46.5 (30-Dec-2021)

# Filesystem at /dev/vdb is mounted on /mnt/data; on-line resizing required

# old_desc_blocks = 3, new_desc_blocks = 7

# The filesystem on /dev/vdb is now 13107200 (4k) blocks long.

df -h /mnt/data

# Filesystem Size Used Avail Use% Mounted on

# /dev/vdb 50G 44M 47G 1% /mnt/data
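The block count printed by `resize2fs` can be checked by hand: a volume of N GiB holds N × 1024³ / 4096 blocks of 4 KiB. A minimal sketch of that check follows; `fs_blocks_4k` is a hypothetical helper name.

```shell
#!/bin/sh
# fs_blocks_4k SIZE_GIB
# Prints how many 4 KiB blocks fit in a volume of SIZE_GIB gibibytes.
fs_blocks_4k() {
    size_gib=$1
    echo $(( size_gib * 1024 * 1024 * 1024 / 4096 ))
}

# Example: the 50GB volume from this section
# fs_blocks_4k 50
```

If `resize2fs` reports a much smaller number than this, the guest most likely has not noticed the new volume size yet, and re-attaching the volume or rebooting the VM is a reasonable next step.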

11. Advanced Neutron: VXLAN, DVR, OVS vs OVN

Network Backend Comparison

| Aspect | LinuxBridge | Open vSwitch (OVS) | OVN |

|---|---|---|---|

| Complexity | Low | Medium | High (but auto-managed) |

| Performance | Good | Very good | Best |

| DVR support | No | Yes | Yes (native) |

| DPDK support | No | Yes | Yes |

| L3 routing | Via L3 agent | Via L3 agent | Natively distributed |

| Firewall | iptables | iptables/conntrack | OVN ACL (faster) |

| Scale | Small to medium | Medium to large | Large |

| Maintenance | Easy | Moderate | Automatic |

Diagnosing Network Problems

This is the question beginners ask most often: "My VM cannot ping the outside world."

# === Step 1: Check the VM's security group ===

openstack server show my-vm-01 -f value -c security_groups

# [{'name': 'default'}]

openstack security group rule list default

# Make sure matching ICMP egress and ingress rules exist

# Add the ICMP rules if they are missing

openstack security group rule create --protocol icmp --ingress default

openstack security group rule create --protocol icmp --egress default

# === Step 2: Check the IP assignment ===

openstack server show my-vm-01 -f value -c addresses

# internal-net=10.10.0.5

# === Step 3: Check the port in Neutron ===

openstack port list --server my-vm-01

# Output:

# +--------------------------------------+------+-------------------+-----------------------------------+--------+

# | ID | Name | MAC Address | Fixed IP Addresses | Status |

# +--------------------------------------+------+-------------------+-----------------------------------+--------+

# | aabb1122-... | | fa:16:3e:ab:cd:ef | ip_address='10.10.0.5', subnet.. | ACTIVE |

# +--------------------------------------+------+-------------------+-----------------------------------+--------+

# === Step 4: Check that the router is set up correctly ===

openstack router show internal-router

# Make sure that:

# - external_gateway_info is not empty

# - interfaces contains the right subnet

openstack router show internal-router -f value -c external_gateway_info

# {'network_id': '11111111-2222-...', 'enable_snat': True, ...}

# === Step 5: Check inside the network namespace ===

# (run on the controller/network node as root)

sudo ip netns list

# qrouter-xxxx-yyyy (Neutron router ID)

# qdhcp-zzzz-wwww (DHCP agent)

ROUTER_NS=$(sudo ip netns list | grep qrouter | awk '{print $1}')

# Check the interfaces in the router namespace

sudo ip netns exec $ROUTER_NS ip addr

# The output shows the qr-xxx (internal) and qg-xxx (external) interfaces

# Check the routing table in the namespace

sudo ip netns exec $ROUTER_NS ip route

# default via 203.0.113.1 dev qg-abcdef01-23

# 10.10.0.0/24 dev qr-12345678-90

# Ping the internet from the router namespace

sudo ip netns exec $ROUTER_NS ping -c 3 8.8.8.8

# PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.

# 64 bytes from 8.8.8.8: icmp_seq=1 ttl=118 time=1.23 ms

# If this works but the VM still cannot reach the internet, the problem is in the security group or DHCP

# Ping the VM from the router namespace

sudo ip netns exec $ROUTER_NS ping -c 3 10.10.0.5

# This must succeed

# === Step 6: Check IP forwarding on the host ===

sysctl net.ipv4.ip_forward

# net.ipv4.ip_forward = 1 ← must be 1

# If it is 0, enable it:

sudo sysctl -w net.ipv4.ip_forward=1

echo "net.ipv4.ip_forward=1" | sudo tee -a /etc/sysctl.conf

# === Step 7: Check the bridge mapping (LinuxBridge) ===

grep physical_interface /etc/neutron/plugins/ml2/linuxbridge_agent.ini

# physical_interface_mappings = provider:eth0

# Make sure the interface name is correct

# Check bridge

brctl show

# bridge name bridge id STP enabled interfaces

# brq77777777 8000.xxxxxxxxxxxx no eth0

# vxlan-100

# tap-xxxxxxxx

DVR (Distributed Virtual Router)

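Without DVR, routed East-West traffic (between VMs on different subnets) and all North-South traffic (VM to the internet) has to pass through the network node, which becomes a bottleneck. Traffic between VMs on the same network already travels directly between compute nodes over the tunnel.

With DVR, every compute node has its own router namespace, so routed traffic between VMs and floating IP traffic are handled directly on the compute node without passing through the network node. SNAT traffic from VMs that have no floating IP still goes through the network node.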

# Enable DVR in /etc/neutron/neutron.conf (controller)

[DEFAULT]

router_distributed = True

# In /etc/neutron/l3_agent.ini (all nodes)

# Keep comments on their own lines: text after a value is read as part of the value

# Network/controller node: agent_mode = dvr_snat

# Compute node: agent_mode = dvr

[DEFAULT]

agent_mode = dvr_snat

# Create a router with DVR enabled

openstack router create --distributed internal-router-dvr

# Verify

openstack router show internal-router-dvr | grep distributed

# | distributed | True |

VXLAN Deep Dive

VXLAN (Virtual Extensible LAN) encapsulates L2 Ethernet frames inside UDP/IP packets. This lets isolated virtual networks run on top of the same physical network.

VM1 (10.10.0.5) ──► [OVS Bridge] ──► VXLAN Tunnel ──► [OVS Bridge] ──► VM2 (10.10.0.8)
                      compute1         UDP:4789         compute2

# Check the active VXLAN tunnels (on a compute node)

sudo ovs-vsctl show

# Bridge br-tun

#     Port "vxlan-0a00000b"

#         Interface "vxlan-0a00000b"

#             type: vxlan

#             options: {df_default="true", in_key=flow, local_ip="10.0.0.31", out_key=flow, remote_ip="10.0.0.11"}

# Bridge br-int

#     Port "tap-12345678"

#         tag: 1

#         Interface "tap-12345678"

# Check VXLAN traffic (UDP port 4789)

sudo tcpdump -i eth0 -n port 4789 -c 10

# 09:15:01.123456 IP 10.0.0.31.44321 > 10.0.0.11.4789: VXLAN, flags [I] (0x08)

# 09:15:01.123789 IP 10.0.0.11.55432 > 10.0.0.31.4789: VXLAN, flags [I] (0x08)
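Because every frame is wrapped in extra headers, a VXLAN tenant network has a smaller usable MTU than the physical network underneath it. Over an IPv4 underlay the overhead is 50 bytes (inner Ethernet 14 + VXLAN 8 + UDP 8 + outer IPv4 20); over IPv6 it is 70 bytes. A minimal sketch of that calculation follows; `tenant_mtu` is a hypothetical helper name.

```shell
#!/bin/sh
# tenant_mtu PHYSICAL_MTU [UNDERLAY_IP_VERSION]
# Prints the largest MTU a VM on a VXLAN network can use.
tenant_mtu() {
    physical_mtu=$1
    ip_version=${2:-4}
    case "$ip_version" in
        4) overhead=50 ;;
        6) overhead=70 ;;
        *) echo "unknown IP version: $ip_version" >&2; return 1 ;;
    esac
    echo $((physical_mtu - overhead))
}

# Example: a standard 1500-byte physical network
# tenant_mtu 1500
```

This explains why VMs on VXLAN networks commonly show an MTU of 1450. When large transfers stall while ping still works, an MTU mismatch between the VM and the underlay is a good first suspect.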

12. Advanced Nova: Live Migration, Resize, Evacuation

Live Migration

Move a VM from one compute node to another with near-zero downtime. Useful during hardware maintenance.

Live migration requirements:

- Shared storage (NFS or Ceph), or block migration (the disk is copied over the network)

- Libvirt on the compute nodes can communicate with each other

- CPU models are compatible across the compute nodes

# Check which compute node the VM is running on

openstack server show my-vm-01 -f value -c OS-EXT-SRV-ATTR:host

# compute1

# Live migrate to compute2

openstack server migrate --live compute2 my-vm-01

# Monitor progress

watch -n 1 "openstack server show my-vm-01 -f value -c status -c OS-EXT-STS:task_state"

# Output during the migration:

# ACTIVE

# migrating

# After it finishes:

# ACTIVE

# None

# Verify the move

openstack server show my-vm-01 -f value -c OS-EXT-SRV-ATTR:host

# compute2

# The VM stays reachable during the migration; downtime is minimal (usually under 1 second)

ping 203.0.113.10

# no packet loss (or very little)

Live Migration Configuration

# On ALL compute nodes, edit libvirtd (lab only: this opens unauthenticated TCP access, and tee overwrites the whole file)

sudo tee /etc/libvirt/libvirtd.conf << 'EOF'

listen_tls = 0

listen_tcp = 1

tcp_port = "16509"

listen_addr = "0.0.0.0"

auth_tcp = "none"

EOF

# Edit the libvirt daemon defaults

sudo sed -i 's/#LIBVIRTD_ARGS="--listen"/LIBVIRTD_ARGS="--listen"/' /etc/default/libvirtd

sudo systemctl restart libvirtd

# In nova.conf on all compute nodes

# [libvirt]

# live_migration_uri = qemu+tcp://%s/system

# live_migration_tunnelled = false

Block Migration (without shared storage)

# Without shared storage, use block migration

# The disk is copied over the network: slower, but it needs no NFS/Ceph

openstack server migrate --live compute2 --block-migration my-vm-01

VM Resize (Change Flavor)

# Resize from m1.small to m1.medium

openstack server resize --flavor m1.medium my-vm-01

# The VM is shut down, its disk is resized, and it boots again, usually on another compute node (the scheduler picks the host)

# Status changes: ACTIVE → RESIZE → VERIFY_RESIZE

# Monitor

watch -n 2 "openstack server show my-vm-01 -f value -c status"

# After VERIFY_RESIZE, confirm or revert:

# Confirm the resize (the new flavor is kept)

openstack server resize confirm my-vm-01

# Or revert to the old flavor

# openstack server resize revert my-vm-01

# Check the new flavor

openstack server show my-vm-01 -f value -c flavor

# m1.medium

Evacuation (Compute Node Down)

If a compute node crashes or cannot be repaired, its VMs can be evacuated to another node. Unlike live migration, the VMs are down at this point.

# Disable the compute service so nothing new is scheduled on the host (evacuation also requires Nova to see the service as down)

openstack compute service set --disable --disable-reason "Hardware failure" compute1 nova-compute

# Evacuate all VMs from compute1 (legacy python-novaclient command)

nova host-evacuate compute1

# Or evacuate a single VM

openstack server evacuate my-vm-01 --host compute2

# Output:

# +----------------------------+--------------------------------------------------+

# | Field | Value |

# +----------------------------+--------------------------------------------------+

# | server_uuid | abc12345-def6-7890-abcd-ef0123456789 |

# | adminPass | xyzNewPassword123 |

# +----------------------------+--------------------------------------------------+

# The VM is rebuilt on compute2. Without shared storage the root disk is recreated from the original image, so local changes are lost

# Data on Cinder volumes stays safe; only the ephemeral root disk is rebuilt

# After compute1 is repaired, enable it again

openstack compute service set --enable compute1 nova-compute
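Where the legacy `nova` client is not installed, evacuating a whole host can be approximated with a loop over `openstack server list`. This is a minimal sketch, not a replacement for proper fencing: `evacuate_host` is a hypothetical helper name, it assumes admin credentials are loaded, and `--host` on `openstack server evacuate` needs a compute API microversion that supports choosing the target host.

```shell
#!/bin/sh
# evacuate_host FAILED_HOST [TARGET_HOST]
# Evacuates every server that Nova still places on FAILED_HOST.
# Without TARGET_HOST the scheduler picks the destination.
evacuate_host() {
    failed_host=$1
    target_host=${2:-}
    openstack server list --all-projects --host "$failed_host" -f value -c ID |
    while read -r server_id; do
        [ -n "$server_id" ] || continue
        if [ -n "$target_host" ]; then
            openstack server evacuate --host "$target_host" "$server_id" </dev/null
        else
            openstack server evacuate "$server_id" </dev/null
        fi
    done
}

# Example:
# evacuate_host compute1 compute2
```

Before running anything like this, make sure the failed node really is powered off. Evacuating VMs whose original instances are still running can corrupt disks on shared storage.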

Shelve and Unshelve

Shelving "stores" the VM in Glance as a snapshot and then deallocates its resources. Useful for VMs that are idle but whose state must be kept.

# Shelve the VM: resources are released and the disk is snapshotted to Glance

openstack server shelve my-vm-01

# Status: ACTIVE → SHELVED_OFFLOADED

# Compute resources are freed

# Unshelve: boot again from the snapshot

openstack server unshelve my-vm-01

# Status: SHELVED_OFFLOADED → ACTIVE

13. Kolla-Ansible: Deploying Production OpenStack with Containers

Kolla-Ansible is one of the most widely used ways to deploy OpenStack in production today. Every OpenStack service runs in a Docker container managed by Ansible playbooks. Advantages: cleaner upgrades (swap the container image instead of updating packages), easier rollback, and better isolation between services.

Preparation

You need at least 2 servers (1 is enough for all-in-one):

| Node | Specification | IP |

|---|---|---|

| deploy | Where Ansible runs (can be a laptop) | 10.0.0.5 |

| controller | Controller + Compute + Storage | 10.0.0.11 |

| compute1 (optional) | Additional compute | 10.0.0.31 |

# ======= On the deploy node =======

# Install dependencies

sudo apt-get update

sudo apt-get install -y python3-dev python3-pip python3-venv libffi-dev gcc libssl-dev git

# Create a virtualenv

python3 -m venv ~/kolla-venv

source ~/kolla-venv/bin/activate

# Install the kolla-ansible series that matches openstack_release (18.x corresponds to 2024.1)

pip install 'ansible-core>=2.14,<2.16'

pip install 'kolla-ansible>=18,<19'

# Create the configuration directory

sudo mkdir -p /etc/kolla

sudo chown $USER:$USER /etc/kolla

# Copy the configuration templates

cp -r ~/kolla-venv/share/kolla-ansible/etc_examples/kolla/* /etc/kolla/

cp ~/kolla-venv/share/kolla-ansible/ansible/inventory/all-in-one .

cp ~/kolla-venv/share/kolla-ansible/ansible/inventory/multinode .

# Install Ansible Galaxy requirements

kolla-ansible install-deps

Configuration

# Edit /etc/kolla/globals.yml, the main Kolla configuration file

cat > /etc/kolla/globals.yml << 'GLOBALS'

---

# Base options

kolla_base_distro: "ubuntu"

# (kolla_install_type is no longer used in recent releases; only source-built images are published)

openstack_release: "2024.1"

# Networking

kolla_internal_vip_address: "10.0.0.100"

network_interface: "eth0"

neutron_external_interface: "eth1"

neutron_plugin_agent: "openvswitch"

# Enable services

enable_cinder: "yes"

enable_cinder_backend_lvm: "yes"

enable_horizon: "yes"

enable_heat: "yes"

enable_neutron_provider_networks: "yes"

# Cinder LVM

cinder_volume_group: "cinder-volumes"

# Docker

docker_registry: "quay.io"

docker_namespace: "openstack.kolla"

# Logging

enable_central_logging: "yes"

GLOBALS

# Edit the inventory file

# The all-in-one file works as-is for a single node

# For multi-node, edit only the top-level groups at the top of the copied multinode file

# so that they look like the lines below. Keep the [...:children] sections further down:

# overwriting the whole file removes them and the deploy fails.

[control]

controller ansible_host=10.0.0.11 ansible_user=ubuntu

[network]

controller ansible_host=10.0.0.11 ansible_user=ubuntu

[compute]

compute1 ansible_host=10.0.0.31 ansible_user=ubuntu

[monitoring]

controller ansible_host=10.0.0.11 ansible_user=ubuntu

[storage]

controller ansible_host=10.0.0.11 ansible_user=ubuntu

[deployment]

localhost ansible_connection=local

# Generate passwords for all services

kolla-genpwd

# Inspect the generated passwords

head -20 /etc/kolla/passwords.yml

# keystone_admin_password: xyzABC123def

# database_password: abc123XYZ789

# rabbitmq_password: ...

Deploy

# Step 1: Bootstrap target server (install Docker, setup OS)

kolla-ansible -i multinode bootstrap-servers

# Output (summary):

# PLAY [Apply role baremetal] ****

#

# TASK [baremetal : Install docker packages] ****

# changed: [controller]

# changed: [compute1]

#

# TASK [baremetal : Enable docker service] ****

# ok: [controller]

# ok: [compute1]

#

# PLAY RECAP ****

# controller : ok=45 changed=20 failed=0

# compute1 : ok=35 changed=15 failed=0

# Step 2: Pre-checks (validate all requirements)

kolla-ansible -i multinode prechecks

# Output:

# PLAY [Apply role prechecks] ****

#

# TASK [prechecks : Checking Docker version] ****

# ok: [controller]

# ok: [compute1]

#

# TASK [prechecks : Checking free port for Keystone] ****

# ok: [controller]

#

# PLAY RECAP ****

# controller : ok=85 changed=0 failed=0

# compute1 : ok=40 changed=0 failed=0

# Step 3: Pull Docker images

kolla-ansible -i multinode pull

# Output (takes a long time; all images are downloaded):

# TASK [Pull kolla/ubuntu-source-keystone image] ****

# changed: [controller]

#

# TASK [Pull kolla/ubuntu-source-nova-api image] ****

# changed: [controller]

# ...

# Step 4: Deploy!

kolla-ansible -i multinode deploy

# Output:

# PLAY [Apply role keystone] ****

#

# TASK [keystone : Starting Keystone container] ****

# changed: [controller]

#

# TASK [keystone : Waiting for Keystone to become available] ****

# ok: [controller]

#

# PLAY [Apply role nova] ****

#

# TASK [nova : Starting nova-api container] ****

# changed: [controller]

#

# TASK [nova : Starting nova-compute container] ****

# changed: [compute1]

# ...

#

# PLAY RECAP ****

# controller : ok=250 changed=85 failed=0

# compute1 : ok=80 changed=35 failed=0

# Step 5: Post-deploy (generate the admin credential file)

kolla-ansible -i multinode post-deploy

# The credential file is created at:

# /etc/kolla/admin-openrc.sh

# Load the credentials

source /etc/kolla/admin-openrc.sh

# Verify

openstack service list

# +----------------------------------+----------+-----------+

# | ID | Name | Type |

# +----------------------------------+----------+-----------+

# | ... | keystone | identity |

# | ... | nova | compute |

# | ... | neutron | network |

# | ... | glance | image |

# | ... | cinder | volume |

# | ... | cinderv3 | volumev3 |

# | ... | heat | orches.. |

# +----------------------------------+----------+-----------+

# Check the running Docker containers

sudo docker ps --format "table {{.Names}}\t{{.Status}}" | head -20

# NAMES STATUS

# keystone Up 10 minutes

# nova_api Up 8 minutes

# nova_scheduler Up 8 minutes

# nova_conductor Up 8 minutes

# nova_novncproxy Up 8 minutes

# nova_compute Up 7 minutes

# neutron_server Up 7 minutes

# neutron_openvswitch_agent Up 7 minutes

# neutron_l3_agent Up 7 minutes

# neutron_dhcp_agent Up 7 minutes

# neutron_metadata_agent Up 7 minutes

# glance_api Up 9 minutes

# cinder_api Up 6 minutes

# cinder_scheduler Up 6 minutes

# cinder_volume Up 6 minutes

# horizon Up 5 minutes

# rabbitmq Up 12 minutes

# mariadb Up 12 minutes

# memcached Up 12 minutes

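Upgrading OpenStack with Kolla

# Change the release in globals.yml

# openstack_release: "2024.2" (from 2024.1)

# Also upgrade the kolla-ansible package itself to the matching release before continuing

# Pull the new images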

kolla-ansible -i multinode pull

# Upgrade! (rolling per-service)

kolla-ansible -i multinode upgrade

# Output:

# TASK [keystone : Stopping keystone container] ****

# changed: [controller]

#

# TASK [keystone : Starting keystone container with new image] ****

# changed: [controller]

#

# TASK [keystone : Running keystone db_sync] ****

# changed: [controller]

# ...

# (continues with the next service)

# List the API versions the services now expose

openstack versions show

14. Integrating Ceph as the Storage Backend

Ceph is a distributed storage system that provides block storage (RBD), object storage (RADOS Gateway), and file storage (CephFS) in a single cluster. Many production OpenStack deployments use Ceph as the backend for Glance, Cinder, and Nova ephemeral disks.

Why Ceph for OpenStack?

| Aspect | LVM (Default) | Ceph |

|---|---|---|

| Replication | None (single disk) | 3x replication, automatic |

| Scale | Vertical (add disks to a server) | Horizontal (add servers) |

| Live migration support | Needs NFS or a copy over the network | Native (shared RBD) |

| Snapshot | Local CoW snapshot | Distributed snapshot |

| Boot from volume | Yes | Yes, faster (clone) |

| Single point of failure | Yes (disk/server failure = data loss) | None |

Ceph Cluster Architecture

                 ┌─────────────────┐
                 │  Ceph Monitor   │ (at least 3, odd number)
                 │   (ceph-mon)    │ tracks cluster state
                 └────────┬────────┘
                          │
       ┌──────────────────┼──────────────────┐
       │                  │                  │
┌──────▼──────┐    ┌──────▼──────┐    ┌──────▼──────┐
│    OSD 1    │    │    OSD 2    │    │    OSD 3    │
│  /dev/sdb   │    │  /dev/sdb   │    │  /dev/sdb   │
│  /dev/sdc   │    │  /dev/sdc   │    │  /dev/sdc   │
│  ceph-osd   │    │  ceph-osd   │    │  ceph-osd   │
└─────────────┘    └─────────────┘    └─────────────┘

OSD = Object Storage Daemon. Each physical disk gets one OSD. Data is distributed across all OSDs by the CRUSH algorithm.

Install a Ceph Cluster (3 Nodes)
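Two quick calculations help when sizing a cluster like this one. Monitors need a strict majority to form a quorum, which is why an odd count is recommended: a fourth monitor tolerates no more failures than three do. With replicated pools, usable capacity is roughly the raw capacity divided by the replica count. The sketch below uses hypothetical helper names and ignores the headroom Ceph needs for rebalancing.

```shell
#!/bin/sh
# mon_quorum MONITOR_COUNT
# Prints how many monitors must be up for the cluster to keep a quorum.
mon_quorum() {
    echo $(( $1 / 2 + 1 ))
}

# mon_failures_tolerated MONITOR_COUNT
# Prints how many monitors can fail before the quorum is lost.
mon_failures_tolerated() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

# usable_capacity_gib RAW_GIB REPLICAS
# Prints the approximate usable capacity of a replicated pool.
usable_capacity_gib() {
    echo $(( $1 / $2 ))
}

# Example: 3 monitors, 6 disks of 1000 GiB each, 3 replicas
# mon_failures_tolerated 3
# usable_capacity_gib 6000 3
```

In practice, plan to keep a good share of the usable capacity free, since a cluster that fills up cannot re-replicate data after a disk failure.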

| Node | Role | Disk |

|---|---|---|

| ceph1 (10.0.0.51) | mon, mgr, osd | /dev/sdb, /dev/sdc |

| ceph2 (10.0.0.52) | mon, osd | /dev/sdb, /dev/sdc |

| ceph3 (10.0.0.53) | mon, osd | /dev/sdb, /dev/sdc |

# ======= Di ceph1 (deploy node) =======

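# Install cephadm (from Reef onward the script in the git tree is no longer a standalone file, so download the prebuilt binary; set CEPH_RELEASE to the release you are deploying)

CEPH_RELEASE=18.2.0

curl --silent --remote-name --location https://download.ceph.com/rpm-${CEPH_RELEASE}/el9/noarch/cephadm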

chmod +x cephadm

sudo mv cephadm /usr/local/bin/

# Bootstrap cluster

sudo cephadm bootstrap \

--mon-ip 10.0.0.51 \

--initial-dashboard-password CephAdmin123 \

--dashboard-password-noupdate

# Output:

# Ceph Dashboard is now available at:

# URL: https://ceph1:8443/

# User: admin

# Password: CephAdmin123

#

# Enabling client.admin keyring and conf on hosts with "admin" label

# Saving cluster configuration to /var/lib/ceph/<fsid>/config

# Enabling autotune for osd_memory_target

# You can access the Ceph CLI as following in case of multi-cluster:

# sudo /usr/sbin/cephadm shell --fsid <fsid> -c /etc/ceph/ceph.conf

# Or, if you are only running a single cluster:

# sudo /usr/sbin/cephadm shell

# Please consider enabling telemetry to help improve Ceph:

# ceph telemetry on

# Install ceph-common for the CLI

sudo cephadm install ceph-common

# Check cluster status

sudo ceph -s

# Output:

# cluster:

# id: a1b2c3d4-e5f6-7890-abcd-ef0123456789

# health: HEALTH_WARN

# OSD count 0 < osd_pool_default_size 3

#

# services:

# mon: 1 daemons, quorum ceph1

# mgr: ceph1.abcdef(active)

#

# data:

# pools: 0 pools, 0 pgs

# objects: 0 objects, 0 B

# usage: 0 B used, 0 B / 0 B avail

# pgs:

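# Add the other nodes to the cluster (cephadm needs the cluster's SSH public key on each new host first, e.g. ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph2)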

sudo ceph orch host add ceph2 10.0.0.52

sudo ceph orch host add ceph3 10.0.0.53

# Output:

# Added host 'ceph2' with addr '10.0.0.52'

# Added host 'ceph3' with addr '10.0.0.53'

# Deploy monitors to all nodes

sudo ceph orch apply mon --placement="ceph1,ceph2,ceph3"

# Add every available disk as an OSD

sudo ceph orch apply osd --all-available-devices

# Or add specific disks instead

sudo ceph orch daemon add osd ceph1:/dev/sdb

sudo ceph orch daemon add osd ceph1:/dev/sdc

sudo ceph orch daemon add osd ceph2:/dev/sdb

sudo ceph orch daemon add osd ceph2:/dev/sdc

sudo ceph orch daemon add osd ceph3:/dev/sdb

sudo ceph orch daemon add osd ceph3:/dev/sdc

# Check OSD status

sudo ceph osd tree

# Output:

# ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

# -1 5.45679 root default

# -3 1.81893 host ceph1

# 0 hdd 0.90947 osd.0 up 1.00000 1.00000

# 1 hdd 0.90947 osd.1 up 1.00000 1.00000

# -5 1.81893 host ceph2

# 2 hdd 0.90947 osd.2 up 1.00000 1.00000

# 3 hdd 0.90947 osd.3 up 1.00000 1.00000

# -7 1.81893 host ceph3

# 4 hdd 0.90947 osd.4 up 1.00000 1.00000

# 5 hdd 0.90947 osd.5 up 1.00000 1.00000

# Check cluster health

sudo ceph -s

# Output (healthy cluster):

# cluster:

# id: a1b2c3d4-e5f6-7890-abcd-ef0123456789

# health: HEALTH_OK

#

# services:

# mon: 3 daemons, quorum ceph1,ceph2,ceph3

# mgr: ceph1.abcdef(active), standbys: ceph2.ghijkl

# osd: 6 osds: 6 up, 6 in

#

# data:

# pools: 1 pools, 1 pgs

# objects: 0 objects, 0 B

# usage: 6.1 GiB used, 5.4 TiB / 5.5 TiB avail

# pgs: 1 active+clean
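The raw capacity reported by `ceph -s` is not what OpenStack can actually store: with replicated pools every object is written once per replica. As a rough sketch, usable capacity is raw capacity divided by the replica count. The function name and the optional fill ratio are assumptions made for illustration; the 0.85 value is only an example threshold, not something taken from this cluster.

```shell
#!/usr/bin/env bash
# Rough usable capacity of a replicated pool: raw capacity / replica count,
# optionally scaled by a fill ratio you are willing to reach.
usable_tib() {
  local raw_tib="$1" replicas="$2" fill_ratio="${3:-1}"
  LC_ALL=C awk -v raw="$raw_tib" -v size="$replicas" -v fill="$fill_ratio" \
    'BEGIN { printf "%.2f\n", raw * fill / size }'
}

usable_tib 5.5 3        # raw capacity and replica count from this lab
usable_tib 5.5 3 0.85   # same, stopping at an assumed 85% fill level
```

For the 5.5 TiB cluster above this works out to a bit under 2 TiB of usable space, which is worth keeping in mind when sizing Cinder quotas.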

Configuring Ceph Pools for OpenStack

# Create a pool for each OpenStack service

sudo ceph osd pool create volumes 128 # Cinder

sudo ceph osd pool create images 128 # Glance

sudo ceph osd pool create vms 128 # Nova ephemeral

sudo ceph osd pool create backups 128 # Cinder backup

# Initialize the pools for RBD

sudo rbd pool init volumes

sudo rbd pool init images

sudo rbd pool init vms

sudo rbd pool init backups

# Create Ceph users for OpenStack

sudo ceph auth get-or-create client.glance \

mon 'profile rbd' \

osd 'profile rbd pool=images' \

mgr 'profile rbd pool=images'

sudo ceph auth get-or-create client.cinder \

mon 'profile rbd' \

osd 'profile rbd pool=volumes, profile rbd pool=vms, profile rbd pool=images' \

mgr 'profile rbd pool=volumes, profile rbd pool=vms, profile rbd pool=images'

sudo ceph auth get-or-create client.cinder-backup \

mon 'profile rbd' \

osd 'profile rbd pool=backups' \

mgr 'profile rbd pool=backups'

# Example output:

# [client.glance]

# key = AQDjxZ1jKl8BOBAAv...==

# Copy the keyrings to the OpenStack controller/compute nodes

sudo ceph auth get-or-create client.glance | \

ssh controller sudo tee /etc/ceph/ceph.client.glance.keyring

sudo ceph auth get-or-create client.cinder | \

ssh controller sudo tee /etc/ceph/ceph.client.cinder.keyring

sudo ceph auth get-or-create client.cinder | \

ssh compute1 sudo tee /etc/ceph/ceph.client.cinder.keyring

# Copy ceph.conf to all OpenStack nodes

sudo scp /etc/ceph/ceph.conf controller:/etc/ceph/

sudo scp /etc/ceph/ceph.conf compute1:/etc/ceph/
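The `pg_num` values given to `ceph osd pool create` above are worth sanity-checking against the size of the cluster. A common rule of thumb is to aim for roughly 100 PG replicas per OSD, and Ceph enforces an upper limit through `mon_max_pg_per_osd` (250 by default in recent releases, as far as I know; `ceph config get mon mon_max_pg_per_osd` shows the value on your cluster). The sketch below only does the arithmetic; the function name is illustrative.

```shell
#!/usr/bin/env bash
# PG replicas per OSD = sum(pg_num of each pool) * replica size / number of OSDs.
pg_replicas_per_osd() {
  local osds="$1" size="$2"
  shift 2
  local total=0 pg
  for pg in "$@"; do
    total=$(( total + pg ))
  done
  echo $(( total * size / osds ))
}

# Values from this lab: 6 OSDs, 3 replicas, four pools of 128 PGs each.
pg_replicas_per_osd 6 3 128 128 128 128
# The same pools at 64 PGs each.
pg_replicas_per_osd 6 3 64 64 64 64
```

With four pools of 128 PGs on six OSDs the result lands above 250, so on a cluster this small the last `pool create` may be rejected; a lower `pg_num` (or letting the PG autoscaler manage it) keeps the lab within the limit.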

Configuring Glance with Ceph

# /etc/glance/glance-api.conf (on the controller)

[DEFAULT]

show_image_direct_url = True

[glance_store]

stores = rbd

default_store = rbd

rbd_store_pool = images

rbd_store_user = glance

rbd_store_ceph_conf = /etc/ceph/ceph.conf

rbd_store_chunk_size = 8

sudo systemctl restart glance-api

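# Upload an image; it is stored in the Ceph 'images' pool automatically

# (the Ceph documentation recommends raw rather than qcow2 images when instances or volumes should be cloned from them)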

openstack image create \

--disk-format qcow2 \

--container-format bare \

--file /tmp/ubuntu-22.04.img \

--public \

"Ubuntu 22.04 Ceph"

# Verify the image is in Ceph

sudo rbd -p images ls

# f1e2d3c4-b5a6-7890-abcd-ef0123456789

sudo rbd -p images info f1e2d3c4-b5a6-7890-abcd-ef0123456789

# rbd image 'f1e2d3c4-b5a6-7890-abcd-ef0123456789':

# size 645 MiB in 81 objects

# order 23 (8 MiB objects)

# snapshot_count: 1

# id: 1a2b3c4d5e6f

# block_name_prefix: rbd_data.1a2b3c4d5e6f

# format: 2

# features: layering, exclusive-lock, object-map, fast-diff
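The numbers in the `rbd info` output can be cross-checked by hand. An RBD image is striped into objects of 2^order bytes, so order 23 corresponds to the 8 MiB set by `rbd_store_chunk_size = 8`, and the object count is the image size divided by the object size, rounded up. A minimal sketch of that arithmetic (function names are illustrative):

```shell
#!/usr/bin/env bash
# Object size in MiB for an RBD "order": objects are 2^order bytes.
order_to_mib() {
  echo $(( (1 << $1) / 1024 / 1024 ))
}

# Number of objects an image spans: ceil(size / object size).
object_count() {
  local size_mib="$1" object_mib="$2"
  echo $(( (size_mib + object_mib - 1) / object_mib ))
}

order_to_mib 23        # order 23 from the rbd info output
object_count 645 8     # 645 MiB image, 8 MiB objects
```

The count is an upper bound on what is stored: RBD images are thin-provisioned, so objects that were never written do not consume space.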

Configuring Cinder with Ceph

# /etc/cinder/cinder.conf (on the controller)

[DEFAULT]

enabled_backends = ceph

default_volume_type = ceph

[ceph]

volume_driver = cinder.volume.drivers.rbd.RBDDriver

volume_backend_name = ceph

rbd_pool = volumes

rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_flatten_volume_from_snapshot = false

rbd_max_clone_depth = 5

rbd_store_chunk_size = 4

rados_connect_timeout = -1

rbd_user = cinder

rbd_secret_uuid = 457eb676-33da-42ec-9a8c-9293d545c337
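The value of `rbd_secret_uuid` has to be the same UUID that the libvirt secret on each compute node is defined with, and a mismatch is easy to introduce when copying values between nodes. The sketch below shows one way to read the key back from an INI-style file and compare it. `ini_get` is a hypothetical helper written for this example, and the demo runs against a throwaway file rather than the real `cinder.conf`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value of KEY from [SECTION] of an INI-style file.
ini_get() {
  local file="$1" section="$2" key="$3"
  sed -n "/^\[$section\]/,/^\[/ { s/^$key[[:space:]]*=[[:space:]]*//p; }" "$file"
}

# Demo on a throwaway copy of the relevant lines (not the real cinder.conf).
demo_conf="$(mktemp)"
cat > "$demo_conf" << 'EOF'
[DEFAULT]
enabled_backends = ceph

[ceph]
rbd_user = cinder
rbd_secret_uuid = 457eb676-33da-42ec-9a8c-9293d545c337
EOF

libvirt_uuid=457eb676-33da-42ec-9a8c-9293d545c337
if [ "$(ini_get "$demo_conf" ceph rbd_secret_uuid)" = "$libvirt_uuid" ]; then
  echo "rbd_secret_uuid matches the libvirt secret UUID"
else
  echo "UUID mismatch: volume attach will likely fail" >&2
fi
rm -f "$demo_conf"
```

On a real node the same call would be `ini_get /etc/cinder/cinder.conf ceph rbd_secret_uuid`, assuming the backend section is named `[ceph]` as in the configuration above.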

# Set the UUID for the libvirt secret (run on every compute node; generate it once, e.g. with uuidgen, and reuse the same value as rbd_secret_uuid)

UUID=457eb676-33da-42ec-9a8c-9293d545c337

# Create the secret XML

cat > /tmp/secret.xml << EOF

<secret ephemeral='no' private='no'>

<uuid>${UUID}</uuid>

<usage type='ceph'>

<name>client.cinder secret</name>

</usage>